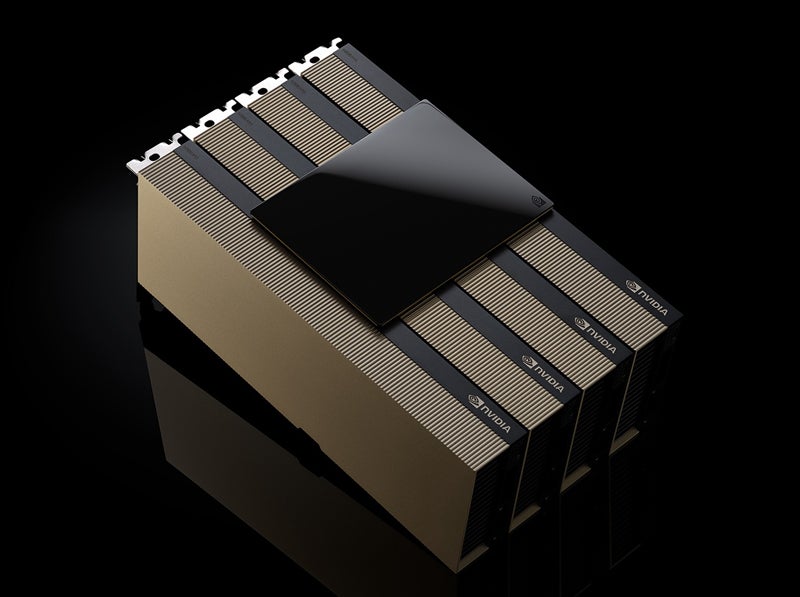

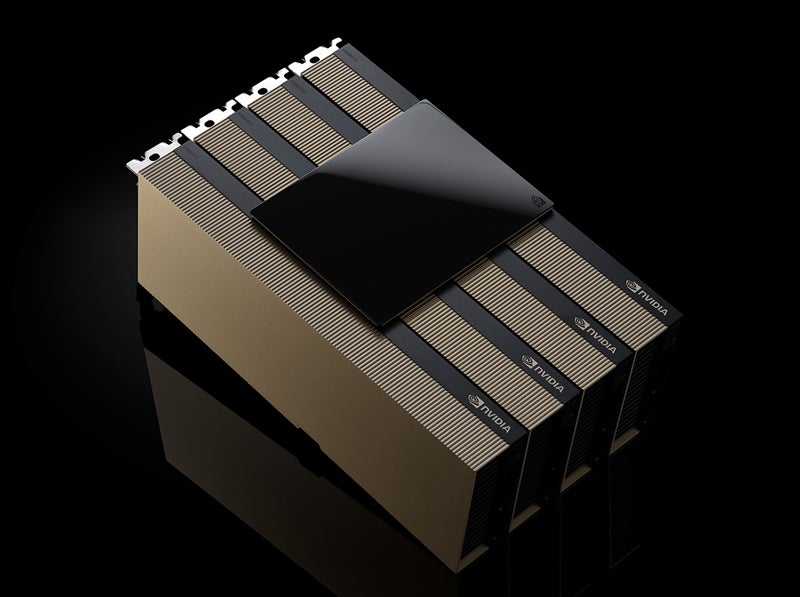

NVIDIA announced new infrastructure, hardware, and resources for scientific research and enterprise at the International Conference for High Performance Computing, Networking, Storage, and Analysis (SC24), held Nov. 17 to Nov. 22 in Atlanta. Key among these announcements was the upcoming general availability of the H200 NVL AI accelerator.

The newest Hopper chip is coming in December

NVIDIA announced at a media briefing on Nov. 14 that platforms built with the H200 NVL PCIe GPU will be available in December 2024. Enterprise customers can refer to an Enterprise Reference Architecture for the H200 NVL. Purchases of the new GPU at enterprise scale will include a five-year subscription to the NVIDIA AI Enterprise service.

Dion Harris, NVIDIA’s director of accelerated computing, said at the briefing that the H200 NVL is ideal for data centers with lower-power (under 20 kW), air-cooled accelerator rack designs.

“Companies can fine-tune LLMs within a few hours” with the upcoming GPU, Harris said.

The H200 NVL offers 1.5x the memory and 1.2x the bandwidth of the NVIDIA H100 NVL, the company said.

Dell Technologies, Hewlett Packard Enterprise, Lenovo, and Supermicro will support the new PCIe GPU. It will also appear in platforms from Aivres, ASRock Rack, GIGABYTE, Inventec, MSI, Pegatron, QCT, Wistron, and Wiwynn.

Grace Blackwell chip rollout proceeding

Harris also emphasized that partners and vendors have the NVIDIA GB200 NVL4 (Grace Blackwell) chip in hand.

“The rollout of Blackwell is proceeding smoothly,” he said.

Blackwell chips are sold out through the next year.

Unveiling the next phase of real-time Omniverse simulations

In manufacturing, NVIDIA introduced the Omniverse Blueprint for Real-Time CAE Digital Twins, now in early access. This new reference pipeline shows how researchers or organizations can accelerate simulations and real-time visualizations, including real-time virtual wind tunnel testing.

Built on NVIDIA NIM AI microservices, the Omniverse Blueprint for Real-Time CAE Digital Twins allows simulations that typically take weeks or months to be performed in real time. This capability will be on display at SC’24, where Luminary Cloud will show how it can be applied to a fluid dynamics simulation.

“We built Omniverse so that everything can have a digital twin,” Jensen Huang, founder and CEO of NVIDIA, said in a press release.

“By integrating NVIDIA Omniverse Blueprint with Ansys software, we’re enabling our customers to tackle increasingly complex and detailed simulations more quickly and accurately,” said Ajei Gopal, president and CEO of Ansys, in the same press release.

CUDA-X library updates accelerate scientific research

NVIDIA’s CUDA-X libraries help accelerate the real-time simulations. These libraries are also receiving updates targeting scientific research, including changes to CUDA-Q and the release of a new version of cuPyNumeric.

Dynamics simulation functionality will be added to CUDA-Q, NVIDIA’s development platform for building quantum computers. The goal is to perform quantum simulations in practical time frames, such as an hour instead of a year. Google is working with NVIDIA to build representations of its qubits using CUDA-Q, “bringing them closer to the goal of achieving practical, large-scale quantum computing,” Harris said.

NVIDIA also announced the latest version of cuPyNumeric, its accelerated computing library for scientific research. Designed for scientific workloads that are typically written as NumPy programs and run on a single CPU-only node, cuPyNumeric lets those projects scale to thousands of GPUs with minimal code changes. It is currently in use at select research institutions.
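
To give a sense of what "minimal code changes" could look like in practice, here is a rough sketch of a NumPy-style program in which only the import line changes. It assumes cuPyNumeric is installed under the module name `cupynumeric` and falls back to plain NumPy when it is not, so the example still runs on a CPU-only machine. The functions `jacobi_step` and `solve` are hypothetical illustrations, not code from NVIDIA, and the sketch makes no claim about actual speedups.

```python
# Sketch: the same array code, optionally backed by cuPyNumeric.
try:
    # Assumption: cuPyNumeric is installed and importable under this name.
    import cupynumeric as np
    BACKEND = "cupynumeric"
except ImportError:
    # Fallback so the sketch still runs with plain NumPy on a CPU.
    import numpy as np
    BACKEND = "numpy"


def jacobi_step(grid):
    """Return a new grid after one Jacobi relaxation step on the interior."""
    new = grid.copy()
    # Each interior cell becomes the average of its four neighbors.
    new[1:-1, 1:-1] = 0.25 * (
        grid[:-2, 1:-1] + grid[2:, 1:-1] + grid[1:-1, :-2] + grid[1:-1, 2:]
    )
    return new


def solve(n=64, steps=200):
    """Relax an n-by-n grid whose top edge is held at 1.0 (hypothetical setup)."""
    grid = np.zeros((n, n))
    grid[0, :] = 1.0  # fixed boundary condition on the top edge
    for _ in range(steps):
        grid = jacobi_step(grid)
    return grid


if __name__ == "__main__":
    result = solve()
    print("backend:", BACKEND)
    print("mean value:", float(result.mean()))
```

The numerical code itself never mentions the backend. In this sketch, the array library is chosen entirely by the import at the top of the file.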